The digital landscape of 2026 has reached a tipping point. For the last decade, the mantra was “Cloud First.” We pushed every byte of data, every customer interaction, and every analytical process to massive, centralized data centers. But as artificial intelligence has evolved from simple chatbots to complex autonomous agents, the limitations of the cloud—latency, bandwidth costs, and privacy concerns—have become impossible to ignore.

At Sitara Innovations, we are witnessing the dawn of the Edge AI era. This isn’t just a technical shift; it is a fundamental restructuring of how businesses interact with data. By moving intelligence to the “Edge” (the literal boundary where the physical world meets the digital one), companies are achieving response times and levels of privacy that were previously out of reach.

Chapter 1: Understanding Edge AI in 2026 and the Death of “Cloud-Only” Thinking

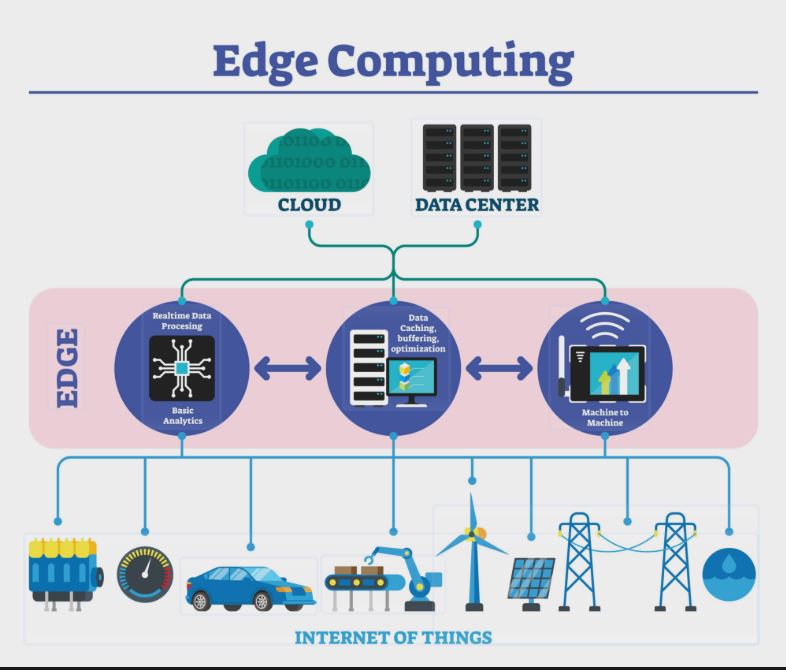

To understand why Edge AI is the most critical tech trend of 2026, we first have to look at the “latency tax.” In 2024, if a smart camera in a warehouse saw a safety hazard, it typically had to send that video feed to a server thousands of miles away, wait for an AI model to process it, and then send a signal back to stop the machinery. Once video upload, network hops, and queuing were added up, that round trip could stretch to a second or more. In a high-speed industrial environment, that delay can be the difference between a minor glitch and a catastrophic accident.

Edge AI eliminates the round trip. By deploying specialized hardware and “Micro LLMs” directly onto local devices, the “thinking” happens where the “seeing” happens.
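To put a rough number on the “latency tax,” here is a back-of-the-envelope sketch. It assumes signals travel through optical fiber at roughly 200,000 km/s (about two-thirds of the speed of light in a vacuum). The 4,000 km distance, 300 ms upload time, and 50 ms / 5 ms inference times are illustrative assumptions, not measurements; the physics term alone is a hard lower bound that no cloud provider can beat.

```python
# Back-of-the-envelope latency comparison: cloud round trip vs. local inference.
# Assumption: signals in optical fiber travel at roughly 200,000 km/s.
FIBER_SPEED_KM_PER_MS = 200.0  # 200,000 km/s == 200 km per millisecond


def propagation_round_trip_ms(distance_km: float) -> float:
    """Minimum round-trip time from physics alone (no routing, queuing or compute)."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_MS


def cloud_latency_ms(distance_km: float, upload_ms: float, inference_ms: float) -> float:
    """Hypothetical cloud path: upload the frame, travel there and back, run inference."""
    return propagation_round_trip_ms(distance_km) + upload_ms + inference_ms


def edge_latency_ms(inference_ms: float) -> float:
    """Hypothetical edge path: the frame never leaves the device, so only inference counts."""
    return inference_ms


if __name__ == "__main__":
    # Illustrative numbers only: a data center about 4,000 km (~2,500 miles) away.
    print(f"physics floor: {propagation_round_trip_ms(4000):.0f} ms")
    print(f"cloud path:    {cloud_latency_ms(4000, upload_ms=300, inference_ms=50):.0f} ms")
    print(f"edge path:     {edge_latency_ms(inference_ms=5):.0f} ms")
```

Even before any upload or inference time, distance alone costs tens of milliseconds; local inference removes that term entirely.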

The Three Pillars of Edge AI Architecture

- Local Inference: The AI model lives on the device (or a local gateway), not in a far-off data center.
- Data Sovereignty: Sensitive information is processed and discarded (or anonymized) locally, never crossing the public internet.
- Real-Time Execution: Response times drop from seconds to milliseconds (often under 10 ms for local inference), enabling true real-time automation.
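The three pillars can be illustrated with a minimal event-handling sketch. The `detect_hazard` function, the 10 ms budget, and the event fields are hypothetical stand-ins; a real deployment would call an on-device model runtime instead of a substring check.

```python
import hashlib
import time
from dataclasses import dataclass
from typing import Optional

LATENCY_BUDGET_MS = 10.0  # target from the "Real-Time Execution" pillar


@dataclass
class HazardEvent:
    camera_id_hash: str  # pseudonymized ID; the raw frame is never included
    hazard: str
    latency_ms: float
    within_budget: bool


def detect_hazard(frame: bytes) -> Optional[str]:
    """Hypothetical stand-in for an on-device model (Local Inference)."""
    return "person_in_restricted_zone" if b"person" in frame else None


def process_frame(camera_id: str, frame: bytes) -> Optional[HazardEvent]:
    start = time.perf_counter()
    hazard = detect_hazard(frame)  # runs locally, no network call
    elapsed_ms = (time.perf_counter() - start) * 1000
    if hazard is None:
        return None  # Data Sovereignty: nothing leaves the device; the frame is dropped
    return HazardEvent(
        camera_id_hash=hashlib.sha256(camera_id.encode()).hexdigest()[:12],
        hazard=hazard,
        latency_ms=elapsed_ms,
        within_budget=elapsed_ms < LATENCY_BUDGET_MS,  # Real-Time Execution check
    )


if __name__ == "__main__":
    print(process_frame("dock-7", b"...person..."))
    print(process_frame("dock-7", b"...empty aisle..."))
```

Only a small, pseudonymized event ever becomes eligible to leave the device; the image itself never does.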

Chapter 2: The Breakthrough of 2026—Micro LLMs and Model Distillation

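The biggest technical hurdle for Edge AI used to be size. You couldn’t fit a frontier-scale model like GPT-4 (widely reported, though never officially confirmed, to have over a trillion parameters) onto a thermostat or a local store controller. However, by 2026 the maturing of model distillation (training a small “student” model to mimic a large one) and quantization (storing weights at lower numerical precision) has changed the game.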
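To make quantization concrete, here is a minimal sketch of symmetric per-tensor int8 quantization, one common scheme among several (production toolchains often use per-channel scales, 4-bit formats, or calibration data). Each float weight is mapped to an integer in [-127, 127] using a single scale factor, cutting storage from 4 bytes to 1 byte per weight at the cost of a small rounding error. The weight values are made up for illustration.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: w ≈ scale * q, with q in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from their int8 codes."""
    return [v * scale for v in q]


if __name__ == "__main__":
    weights = [0.42, -1.27, 0.003, 0.9, -0.55]
    q, scale = quantize_int8(weights)
    restored = dequantize(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print("int8 values:", q)
    print(f"scale={scale:.4f}, max error={max_err:.4f}")
    # Storage: float32 uses 4 bytes per weight; int8 uses 1 (plus one float32 scale per tensor).
    print("bytes float32:", 4 * len(weights), "bytes int8:", len(q) + 4)
```

Rounding guarantees each restored weight is within half a scale step of the original, which is why well-behaved models often lose little accuracy when quantized this way.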

What are Micro LLMs?

Micro LLMs are highly specialized, compact language models. Instead of being a “jack of all trades,” a Micro LLM is trained to be a master of one specific domain, such as medical equipment troubleshooting, inventory management, or legal document parsing.

- Efficiency: These models can run on hardware as modest as a modern smartphone chip or an industrial IoT gateway.
- Performance: Because they are specialized, they can match or even outperform much larger general-purpose models within their specific niche.
- Energy Consumption: Running AI at the edge can be more sustainable, reducing the carbon footprint associated with the massive cooling systems of hyperscale data centers.
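Distillation is one common way to produce such a compact specialist: a small “student” model is trained to match the softened output distribution of a large “teacher.” The sketch below computes the widely used temperature-scaled distillation loss (KL divergence between softened teacher and student distributions, multiplied by T², as in Hinton et al.’s formulation) for a single example. The logits are made-up numbers; a real pipeline would compute this inside a training framework and usually blend it with an ordinary cross-entropy loss on labeled data.

```python
import math
from typing import List


def softmax(logits: List[float], temperature: float = 1.0) -> List[float]:
    scaled = [z / temperature for z in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(z - m) for z in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits: List[float], teacher_logits: List[float],
                      temperature: float = 2.0) -> float:
    """KL(teacher || student) on temperature-softened distributions, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = sum(p * math.log(p / q) for p, q in zip(p_teacher, p_student) if p > 0)
    return (temperature ** 2) * kl


if __name__ == "__main__":
    teacher = [4.0, 1.5, 0.2]  # hypothetical logits from a large general model
    close = [3.8, 1.4, 0.3]    # student that mimics the teacher well
    far = [0.1, 3.0, 2.5]      # student that does not
    print(f"close student loss: {distillation_loss(close, teacher):.4f}")
    print(f"far student loss:   {distillation_loss(far, teacher):.4f}")
```

Minimizing this loss pushes the student toward the teacher’s full probability distribution, not just its top answer, which is much of what lets a small model inherit a large model’s behavior in a narrow domain.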

Chapter 3: Industry Disruptions—Who Wins with Edge AI?

At sitarainnovations.com, we analyze how these technologies translate into bottom-line growth. In 2026, three sectors are leading the charge:

1. Healthcare and Medical Technology

Privacy is one of the main barriers to AI adoption in healthcare. With Edge AI, patient data, such as high-resolution MRI scans or real-time vitals, can be analyzed by an AI model at the bedside.

- The Benefit: Near-instant diagnostic support without the data ever leaving the hospital’s secure local network.
- The Sitara Edge: For our partners at HK Medical, this means building catalogs and support systems that respond with minimal latency, even in environments with intermittent internet connectivity.
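Staying useful through connectivity gaps usually relies on a store-and-forward pattern: decisions are made locally and immediately, while de-identified summaries wait in a local queue until the uplink returns. The sketch below assumes a hypothetical `send` callable that returns False when the network is down; it illustrates the pattern and is not a description of any specific deployment.

```python
from collections import deque
from typing import Callable, Deque, Dict


class StoreAndForward:
    """Keeps de-identified summaries locally until the uplink is available."""

    def __init__(self, send: Callable[[Dict], bool], max_items: int = 1000):
        self.send = send  # hypothetical uplink; returns False when offline
        self.queue: Deque[Dict] = deque(maxlen=max_items)  # oldest entries drop first if full

    def record(self, summary: Dict) -> None:
        self.queue.append(summary)
        self.flush()

    def flush(self) -> int:
        """Try to upload queued items in order; stop at the first failure."""
        sent = 0
        while self.queue:
            if not self.send(self.queue[0]):
                break  # still offline: keep the item and retry later
            self.queue.popleft()
            sent += 1
        return sent


if __name__ == "__main__":
    online = {"up": False}
    uploaded = []

    def fake_send(item: Dict) -> bool:
        if online["up"]:
            uploaded.append(item)
        return online["up"]

    buf = StoreAndForward(fake_send)
    buf.record({"bed": "B12", "alert": "spo2_low"})  # network down: stays queued
    online["up"] = True
    buf.record({"bed": "B12", "alert": "resolved"})  # both items go out, in order
    print(uploaded)
```

The bedside alert itself never waits on the network; only the after-the-fact reporting does.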

2. Retail and Hyper-Personalization

E-commerce is no longer just about a website; it’s about the “Phygital” (Physical + Digital) experience.

- Smart Shelves: Edge AI-enabled cameras can track inventory levels and detect “dwell time” (how long a customer looks at a product).
- Local Processing: This data is processed locally to trigger an instant discount on a nearby digital screen, powered by your Shopify or WooCommerce backend, without the privacy risk of sending facial data to the cloud.
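Once a local vision model has produced anonymous track IDs, dwell time is simple arithmetic. This sketch assumes a hypothetical, time-ordered stream of (timestamp, track_id, shelf_zone) tuples containing no face or image data, and an illustrative 8-second threshold for triggering a promotion; the actual call to a Shopify or WooCommerce backend is out of scope.

```python
from typing import Dict, Iterable, List, Tuple

Detection = Tuple[float, int, str]  # (timestamp_s, anonymous_track_id, shelf_zone)


def dwell_times(detections: Iterable[Detection]) -> Dict[Tuple[int, str], float]:
    """Seconds between first and last sighting of each track in each zone.

    Assumes detections arrive in time order, as they would from a live camera feed.
    """
    first: Dict[Tuple[int, str], float] = {}
    last: Dict[Tuple[int, str], float] = {}
    for ts, track, zone in detections:
        key = (track, zone)
        first.setdefault(key, ts)
        last[key] = ts
    return {key: last[key] - first[key] for key in first}


def zones_to_promote(detections: Iterable[Detection], threshold_s: float = 8.0) -> List[str]:
    """Zones where at least one shopper lingered past the (illustrative) threshold."""
    hot = {zone for (_, zone), secs in dwell_times(detections).items() if secs >= threshold_s}
    return sorted(hot)


if __name__ == "__main__":
    stream = [(0.0, 1, "coffee"), (4.0, 1, "coffee"), (9.5, 1, "coffee"),
              (1.0, 2, "tea"), (2.5, 2, "tea")]
    print(dwell_times(stream))
    print(zones_to_promote(stream))  # a local screen would show an offer for these zones
```

Because the computation only needs track IDs and timestamps, the raw video can be discarded on the device as soon as tracking is done.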

3. Industrial IoT and Manufacturing

We call this “Predictive Maintenance 2.0.” In a factory setting, every minute of unplanned downtime can cost thousands of dollars. Edge AI allows sensors to hear the “acoustic signature” of a failing bearing and shut down the machine before it breaks.
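One lightweight way to “listen” for a failing bearing on a small device is to track the energy at a characteristic fault frequency and compare it with a healthy baseline. The sketch below uses the Goertzel algorithm, a cheap way to evaluate a single DFT bin without computing a full FFT. The 160 Hz fault frequency, 8 kHz sample rate, and 4× alarm ratio are illustrative assumptions; real bearing fault frequencies depend on bearing geometry and shaft speed.

```python
import math
from typing import Sequence


def goertzel_power(samples: Sequence[float], sample_rate: float, target_hz: float) -> float:
    """Squared magnitude of the DFT bin nearest target_hz (Goertzel algorithm)."""
    n = len(samples)
    k = round(n * target_hz / sample_rate)
    coeff = 2 * math.cos(2 * math.pi * k / n)
    s_prev = s_prev2 = 0.0
    for x in samples:
        s = x + coeff * s_prev - s_prev2
        s_prev2, s_prev = s_prev, s
    return s_prev ** 2 + s_prev2 ** 2 - coeff * s_prev * s_prev2


def should_shut_down(samples: Sequence[float], sample_rate: float, fault_hz: float,
                     baseline_power: float, ratio: float = 4.0) -> bool:
    """Trip when energy at the fault frequency exceeds `ratio` times the healthy baseline."""
    return goertzel_power(samples, sample_rate, fault_hz) > ratio * baseline_power


if __name__ == "__main__":
    rate, n, fault_hz = 8000, 800, 160.0
    t = [i / rate for i in range(n)]
    # Synthetic signals: a 50 Hz running hum plus a weak or strong 160 Hz "fault" tone.
    healthy = [math.sin(2 * math.pi * 50 * x) + 0.05 * math.sin(2 * math.pi * fault_hz * x) for x in t]
    failing = [math.sin(2 * math.pi * 50 * x) + 0.5 * math.sin(2 * math.pi * fault_hz * x) for x in t]
    baseline = goertzel_power(healthy, rate, fault_hz)
    print("shut down healthy?", should_shut_down(healthy, rate, fault_hz, baseline))
    print("shut down failing?", should_shut_down(failing, rate, fault_hz, baseline))
```

The per-sample cost is one multiply and two adds, which is why this kind of check fits comfortably on a microcontroller next to the sensor.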

Chapter 4: The Lean Team Dynamics of 2026

One of the most profound impacts of Edge AI is how it enables Lean Team Dynamics. At Sitara Innovations, we’ve seen a high-efficiency two-person unit outperform a ten-person team from 2023.

How? By deploying local AI agents that handle the “heavy lifting” of data monitoring and basic decision-making. This allows human talent to focus on high-level strategy and creative problem-solving. When your infrastructure is “intelligent at the edge,” your human team doesn’t have to be bogged down by monitoring dashboards. The edge monitors itself.
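The idea that “the edge monitors itself” can be sketched as a local agent that watches a metric stream and escalates only statistically unusual readings, so people review exceptions instead of dashboards. A rolling mean and standard deviation (a z-score test) stands in here for whatever model an actual agent would run; the window size and 3-sigma threshold are illustrative assumptions.

```python
import statistics
from collections import deque
from typing import Deque, Optional


class EscalatingMonitor:
    """Flags a reading when it sits more than `z_limit` standard deviations from recent history."""

    def __init__(self, window: int = 30, z_limit: float = 3.0):
        self.history: Deque[float] = deque(maxlen=window)
        self.z_limit = z_limit

    def observe(self, value: float) -> Optional[str]:
        alert = None
        if len(self.history) >= 5:  # need a little history before judging
            mean = statistics.fmean(self.history)
            stdev = statistics.pstdev(self.history)
            if stdev > 0 and abs(value - mean) / stdev > self.z_limit:
                alert = f"escalate: {value} vs recent mean {mean:.1f}"
        self.history.append(value)
        return alert  # None means "handled locally, no human needed"


if __name__ == "__main__":
    monitor = EscalatingMonitor()
    readings = [20.1, 20.3, 19.9, 20.0, 20.2, 20.1, 19.8, 35.0, 20.0]
    for r in readings:
        msg = monitor.observe(r)
        if msg:
            print(msg)
```

Of the nine readings, only the spike produces a message; everything else is absorbed locally.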

Chapter 5: Technical Implementation—How to Migrate to the Edge

If you are managing a platform like AllthingsBNB or a corporate site on sitarainnovations.com, the migration to Edge AI involves three strategic steps:

Step 1: The “Intelligence Audit”

Not everything needs to be at the edge.

- Edge Candidates: Tasks requiring high speed, high privacy, or high reliability (e.g., security alerts, real-time UX adjustments).
- Cloud Candidates: Tasks requiring massive historical data analysis or long-term storage (e.g., yearly financial forecasting).
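The audit can be captured as a simple placement rule that mirrors the two lists above: speed, privacy, and reliability point to the edge, while large historical analysis with relaxed deadlines points to the cloud. The `Workload` fields and the 100 ms cutoff are hypothetical choices for illustration; a real audit would also weigh cost and hardware constraints.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    max_latency_ms: float          # how quickly a response is needed
    handles_sensitive_data: bool   # e.g. personal or medical data
    must_work_offline: bool        # must keep running if the internet drops
    needs_full_history: bool       # requires large historical datasets


def placement(w: Workload, latency_cutoff_ms: float = 100.0) -> str:
    """Return 'edge' or 'cloud' following the audit criteria (hypothetical cutoff)."""
    if w.needs_full_history and w.max_latency_ms >= latency_cutoff_ms:
        return "cloud"  # heavy analysis where a slower answer is acceptable
    if w.max_latency_ms < latency_cutoff_ms or w.handles_sensitive_data or w.must_work_offline:
        return "edge"   # speed, privacy or reliability requirements
    return "cloud"


if __name__ == "__main__":
    jobs = [
        Workload("security alert", 50, False, True, False),
        Workload("real-time UX tweak", 80, False, False, False),
        Workload("yearly financial forecast", 86_400_000, False, False, True),
    ]
    for job in jobs:
        print(f"{job.name}: {placement(job)}")
```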

Step 2: API-First Integration

Your web development stack must be modular. Whether you are moving from WooCommerce to Shopify or building a custom B2B portal, your system must be able to “talk” to local AI gateways via lightweight APIs.
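Here is a minimal sketch of what talking to a local AI gateway through a lightweight API might look like, using only Python’s standard library: a tiny HTTP gateway on the local machine accepts JSON and returns a JSON prediction, and a client calls it with a timeout. The `/infer` path, the payload shape, and the stubbed `classify` function are hypothetical; they are not part of Shopify’s, WooCommerce’s, or any vendor’s API.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer


def classify(text: str) -> str:
    """Hypothetical stand-in for a quantized model running on the gateway."""
    return "urgent" if "refund" in text.lower() else "routine"


class GatewayHandler(BaseHTTPRequestHandler):
    def do_POST(self):
        if self.path != "/infer":
            self.send_error(404)
            return
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length))
        body = json.dumps({"label": classify(payload["text"])}).encode()
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):  # keep the demo output quiet
        pass


def call_gateway(base_url: str, text: str, timeout_s: float = 2.0) -> dict:
    """Client side: what a storefront or portal backend would call."""
    req = urllib.request.Request(
        f"{base_url}/infer",
        data=json.dumps({"text": text}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=timeout_s) as resp:
        return json.loads(resp.read())


if __name__ == "__main__":
    server = ThreadingHTTPServer(("127.0.0.1", 0), GatewayHandler)  # port 0: pick a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    url = f"http://127.0.0.1:{server.server_address[1]}"
    print(call_gateway(url, "Customer asks for a refund"))
    server.shutdown()
    server.server_close()
```

Keeping the contract to a small JSON request and response is what lets the same gateway serve a Shopify storefront, a WooCommerce site, or a custom B2B portal.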

Step 3: Hardware Orchestration

Implementing Edge AI requires a synergy between software and hardware. This involves selecting the right “Edge Nodes”—devices capable of running quantized models—and ensuring they are synchronized with your central management system.
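Node selection can be sketched as filtering a device inventory by what a quantized model needs and flagging nodes whose deployed model version lags the central management system. The `EdgeNode` fields, memory figures, and version strings are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class EdgeNode:
    node_id: str
    free_memory_mb: int
    supports_int8: bool   # can run int8-quantized models
    model_version: str    # version currently deployed on the node


def plan_rollout(nodes: List[EdgeNode], model_size_mb: int,
                 target_version: str) -> Tuple[List[str], List[str]]:
    """Return (nodes to update, nodes that cannot host the model)."""
    to_update, incapable = [], []
    for node in nodes:
        if not node.supports_int8 or node.free_memory_mb < model_size_mb:
            incapable.append(node.node_id)
        elif node.model_version != target_version:
            to_update.append(node.node_id)
        # otherwise the node is capable and already in sync
    return to_update, incapable


if __name__ == "__main__":
    fleet = [
        EdgeNode("store-01", 2048, True, "v1.3"),
        EdgeNode("store-02", 512, True, "v1.4"),
        EdgeNode("store-03", 4096, False, "v1.2"),
        EdgeNode("store-04", 4096, True, "v1.4"),
    ]
    print(plan_rollout(fleet, model_size_mb=1024, target_version="v1.4"))
```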

Chapter 6: Overcoming the Challenges of Edge AI

No technology is a silver bullet. While Edge AI offers incredible benefits, 2026 has highlighted specific hurdles:

- Hardware Diversity: Managing thousands of heterogeneous edge devices is more complex than managing one cloud server.
- Security at the Edge: While data privacy is higher, the physical devices themselves must be hardened against tampering.
- Model Drift: Local models need a way to “check in” with the cloud occasionally to receive updates and to detect when real-world data has shifted away from what they were trained on, before their predictions become outdated.
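A check-in can do two things: compare the local model version with the one the cloud advertises, and report whether the data the device sees has drifted. The sketch below uses the Population Stability Index (PSI) over pre-binned input histograms as the drift signal; the 0.2 alert threshold is an illustrative rule of thumb rather than a standard, and the manifest format is hypothetical.

```python
import math
from typing import Dict, List


def psi(expected_counts: List[int], actual_counts: List[int], eps: float = 1e-6) -> float:
    """Population Stability Index between two histograms over the same bins."""
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    score = 0.0
    for e, a in zip(expected_counts, actual_counts):
        e_frac = max(e / e_total, eps)  # avoid log(0) on empty bins
        a_frac = max(a / a_total, eps)
        score += (a_frac - e_frac) * math.log(a_frac / e_frac)
    return score


def check_in(local_version: str, manifest: Dict[str, str],
             training_hist: List[int], recent_hist: List[int],
             psi_alert: float = 0.2) -> List[str]:
    """Return the actions a device should take after contacting the cloud."""
    actions = []
    if manifest["latest_version"] != local_version:
        actions.append(f"download {manifest['latest_version']}")
    if psi(training_hist, recent_hist) > psi_alert:
        actions.append("report drift and upload anonymized summary statistics")
    return actions


if __name__ == "__main__":
    manifest = {"latest_version": "v2.1"}  # hypothetical cloud manifest
    training = [100, 300, 400, 200]        # input histogram the model was trained on
    stable = [110, 290, 390, 210]
    shifted = [400, 300, 200, 100]
    print(check_in("v2.1", manifest, training, stable))
    print(check_in("v2.0", manifest, training, shifted))
```

Only histograms and a version string cross the network, so the check-in preserves the data-sovereignty benefit described earlier.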

Conclusion: The Future is Distributed

As we navigate the complexities of the 2026 tech landscape, one thing is clear: the future of business is distributed. The companies that will thrive are those that recognize intelligence isn’t a “place” you visit in the cloud—it’s a resource that should be present at every touchpoint of your business.

At Sitara Innovations, we don’t just build websites; we build intelligent ecosystems. From Shopify migrations to Edge AI integration, we ensure your digital presence is fast, secure, and future-proof.

Is your business ready to move to the edge? Explore our Digital Strategy Services at sitarainnovations.com.